Start Your 3D Journey in Cinema 4D

Master the essentials of 3D modeling, lighting, and animation in C4D. Enroll in All-Access to unlock C4D Basecamp and 50+ other courses.

How Perception collaborated with Pixar Animation Studios on the main on end titles for “Lightyear.”

Directed by Angus MacLane, Disney and Pixar’s “Lightyear” is a sci-fi adventure comedy that gives “Toy Story” fans the definitive origin story of Buzz Lightyear, the legendary Space Ranger who inspired the toy. The film was Pixar’s 26th animated feature and includes three post-credit scenes that shouldn’t be missed.

The main on end title sequence was also beautifully designed and animated by Perception in collaboration with Pixar Animation Studios. Perception is an Emmy-nominated studio known for creating cinematic title sequences for Marvel Studios’ “Black Panther,” “Avengers: Endgame,” “WandaVision” and many more.

We talked with Perception’s Chief Creative Director Doug Appleton about the making of the “Lightyear” titles, and how the studio’s team used Cinema 4D, Redshift, After Effects and Nuke to celebrate Buzz Lightyear’s iconic green and white suit and the technology of the Lightyear universe.

Tell us about how Perception approached collaborating on the title sequence.

Appleton: I don’t think anyone here ever thought we’d get the chance to work on a Pixar movie, let alone a Pixar title sequence. Everything is done in-house over there, and we are the first outside vendor they’ve ever worked with, which is crazy to think about.

It was a dream come true, but we knew our approach had to be the same as everything else we do because we’d be doomed if we thought a lot about the project being the opportunity of a lifetime. Of course, it wasn’t just another title sequence, though. On our first call with them, I had my Buzz Lightyear mug next to me and my Buzz Lightyear toy on my shelf, both of which I’ve had for years. It was a surreal moment.

Pixar’s brief explained that they wanted “Lightyear” to feel like a big-action, sci-fi film with a Marvel-style title sequence. They had watched a bunch of Marvel sequences and realized that Perception had done most of the ones they liked, so they got in touch with us.

Say more about your collaboration and how you decided to focus on Buzz’s suit.

Appleton: Angus, the director, is really into sci-fi, so in our first pitch we showed the teaser trailer for “Terminator 2,” where the T-800 is going through the factory being made and you’re looking at all of the different pieces coming together. That really resonated with him, so we started talking about what a Buzz Lightyear version of that would look like and took it from there.

Since we were doing the end titles, we decided it would be better not to focus on the building of the suit. Instead, we brainstormed how we could get that same feeling without literally watching the suit being built and landed on the idea of etching the titles into it.

We went back and forth a lot and nailed the idea to have it feel almost like the larger-than-life suits are a landscape that we travel over. That initial concept didn’t change much, which was great because the original frames we put together ended up being incredibly close to the final.

We talked to the Pixar team probably once a week early on, and then about every other week during production. It was an incredible collaboration. We checked in, showed them rough cuts, and did the whole review based on camera moves. We talked with Angus about how it could look practically shot or be more of a floaty motion graphics-type camera move. He loved the idea of it feeling more practical, and we came up with a motion-control arm kind of rig as a visual solution.

We never showed up saying, ‘this is the way it’s going to be,’ and Angus never did that either, even though he had every right to do that as the director. He would always ask us if we liked an idea and, if we didn’t, he was very receptive. I can’t speak highly enough about how collaborative our relationship with the Pixar team was. They really wanted a partnership on this thing.

What was your process for the etched-looking titles?

Appleton: At first, it was very worrisome to talk about etching something into the models because things can change very quickly with credits. If we modeled the names into the geometry, it could be a real problem when we needed to add a new one or update one. That stuff always happens throughout the process, so we were nervous and needed to come up with something that could be changed easily.

We decided to UV only the section with the name on it and render it out as a separate piece. That allowed us to do all of the names as black and white passes that drove displacement and bump map values and gave us more control over uniform sizing for the names. Multiple passes included the base images and additional passes for the heat that comes off the laser when it goes from red to cool.
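As a rough illustration of that idea (not Perception’s actual Redshift setup), one black-and-white name mask can drive both an etch-depth pass and a cooling heat pass. The sketch below assumes a simple left-to-right wipe; the function name and the `wipe_x`, `max_depth` and `cooldown` parameters are hypothetical and exist only for this example.

```python
def etch_depth_and_heat(text_mask, wipe_x, max_depth=1.0, cooldown=4):
    """Build (depth, heat) passes from one black-and-white text mask.

    text_mask: rows of 0/1 values (1 = inside a letter).
    wipe_x:    first column the laser has NOT reached yet (assumed wipe).
    max_depth: hypothetical etch depth fed to displacement/bump.
    cooldown:  hypothetical number of columns over which the glow fades.
    """
    depth, heat = [], []
    for row in text_mask:
        depth_row, heat_row = [], []
        for x, value in enumerate(row):
            # Only letter pixels the wipe has already passed count as etched.
            etched = value if x < wipe_x else 0
            depth_row.append(max_depth * etched)
            # Glow is 1.0 right behind the laser and fades linearly to 0.
            age = wipe_x - 1 - x
            glow = max(0.0, 1.0 - age / cooldown) if etched else 0.0
            heat_row.append(glow)
        depth.append(depth_row)
        heat.append(heat_row)
    return depth, heat
```

Because both passes come from the same mask, swapping in a new name image would update the depth and the heat together, which is the kind of flexibility described above.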

One tricky part in Cinema was lining up the laser with the leading edge of the type as it was being etched. We created a fourth pass that was just a sliver of the leading edge of the etching, and it was piped into our light to create the laser beam and bridge that gap.
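One way to picture that leading-edge pass, as a minimal sketch rather than the studio’s real setup: take the same name mask and keep only a thin band of letter pixels just behind the wipe position. A left-to-right wipe is assumed, and `leading_edge_pass`, `wipe_x` and `edge_width` are made-up names for illustration.

```python
def leading_edge_pass(text_mask, wipe_x, edge_width=1):
    """Return only the sliver of the mask that is being etched right now.

    text_mask:  rows of 0/1 values (1 = inside a letter).
    wipe_x:     first column the laser has NOT reached yet (assumed wipe).
    edge_width: hypothetical thickness of the sliver, in columns.
    """
    edge = []
    for row in text_mask:
        edge.append([
            # Keep letter pixels inside the band [wipe_x - edge_width, wipe_x).
            value if wipe_x - edge_width <= x < wipe_x else 0
            for x, value in enumerate(row)
        ])
    return edge
```

A pass like this is bright only where the type is currently being cut, so a light driven by it would stay locked to the front of the etch even when the name image changes.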

It took a while to figure that out but, once we put it all together, it all worked surprisingly well. When we needed to update something, we’d update an image sequence, and all the details would just fit. Everything was rendered in Redshift and comped in After Effects, and our final delivery came out of Nuke so we could embed mattes into the EXRs.

How did you get the movement around the suit that you wanted?

Appleton: We worked a lot with the director on that. Angus started off as an animator, so he had a lot of great feedback. I feel like we got a Pixar crash course in camera animation, which took things to a whole different level. At one point, we were on a call sharing a screen with him, and he drew all over our timelines to show us how he would smooth out transitions. It was an incredibly rewarding experience, and he was saying, ‘I’m so sorry I’m drawing on your screen, guys,’ and we were like, ‘oh no, thank you so much.’ It was great.

What else can you tell us about the project that we haven’t talked about?

Appleton: One of the things I found really fun was the stereo process. People don’t think about that much because, most of the time, we don’t think of rendering in stereo. It’s really just the film work where you have to worry about stereo renders. We’ve worked on a few movies that were being done in stereo, but Pixar does it differently so there was a learning curve.

On a typical film, we would render out the left eye, our main 2D render, and that goes into the 2D movie. For the stereo version, we would render a right eye with a parallel camera. Then, in the edit, we would dial in the depth with the left and right eye renders.

Pixar does everything in camera, so when they do stereo, they determine that depth in their renders. We had to change our workflow to match that approach, so instead of a parallel camera we used an off-axis camera, which allowed us to dial in our depth in the render. That meant we had to go back into Cinema to render a new right eye for any minor refinements to the depth.
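The difference between the two camera setups can be sketched with textbook pinhole-camera geometry. This is an illustration under stated assumptions, not Perception’s or Pixar’s pipeline: the function and parameter names are hypothetical, distances share one scene unit, and focal length and sensor width are in millimeters. With parallel cameras, every point has negative parallax until the images are shifted in the edit; with an off-axis camera, the equivalent shift is baked into the render through the convergence distance.

```python
def parallax_fraction(depth, interaxial, focal_length, sensor_width,
                      convergence=None):
    """Horizontal parallax of a point, as a fraction of image width.

    depth, interaxial, convergence: same scene unit (for example cm).
    focal_length, sensor_width:     millimeters.
    convergence=None models parallel cameras (no built-in shift);
    a number models an off-axis camera with zero parallax at that distance.
    Positive values sit behind the screen, negative values in front.
    """
    # Off-axis cameras offset the film back; parallel cameras do not.
    shift = 0.0 if convergence is None else interaxial * focal_length / convergence
    return (shift - interaxial * focal_length / depth) / sensor_width


def post_shift_for_convergence(interaxial, focal_length, sensor_width,
                               convergence):
    """Image shift (fraction of width) that would give a parallel render
    the same zero-parallax distance as the off-axis camera."""
    return interaxial * focal_length / convergence / sensor_width
```

In this model, changing `convergence` on an off-axis camera changes the rendered image itself, which matches the point above that even minor depth refinements meant rendering a new right eye.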

Because of that, we started including padding on the sides of our renders, so we could still have some room to move the renders in the edit and further refine the depth. Whether we go back to using parallel cameras or off-axis cameras, we will probably still render everything with padding from here on out.
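The padding idea comes down to simple arithmetic, sketched here with hypothetical names and numbers. The example assumes the padding is added equally to both sides and that the sensor width is widened in proportion, so the original frame stays unchanged in the middle of the larger render.

```python
import math


def padded_render(width, height, max_shift_px, sensor_width):
    """Return (padded_width, height, padded_sensor_width).

    width, height: delivery resolution in pixels.
    max_shift_px:  largest horizontal slide expected in the edit (assumed).
    sensor_width:  camera sensor width in millimeters.
    """
    pad = math.ceil(max_shift_px)       # whole pixels on each side
    padded_width = width + 2 * pad
    # Widen the sensor by the same ratio so the center crop is unchanged.
    padded_sensor = sensor_width * padded_width / width
    return padded_width, height, padded_sensor
```

For instance, `padded_render(2048, 858, 24, 36.0)` adds 24 pixels per side, leaving room to slide either eye up to 24 pixels before an edge of the delivery frame runs out of image.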

I can’t say enough what a privilege it was to get to work with Pixar. They opened their arms and invited us to play in their world, and were so generous with their time, especially Angus. It was a truly unique experience, and I wish we could do it again ten more times.

Meleah Maynard is a writer and editor in Minneapolis, Minnesota.

ENROLL NOW!

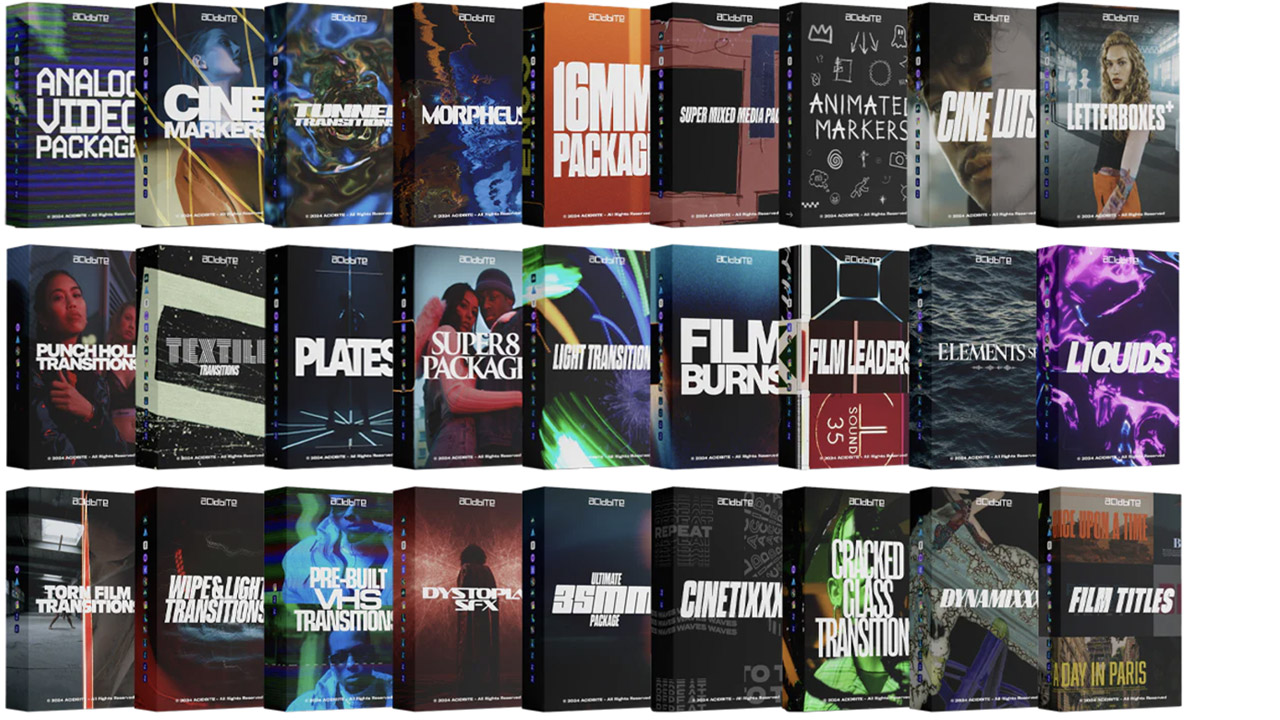

Acidbite ➔

50% off everything

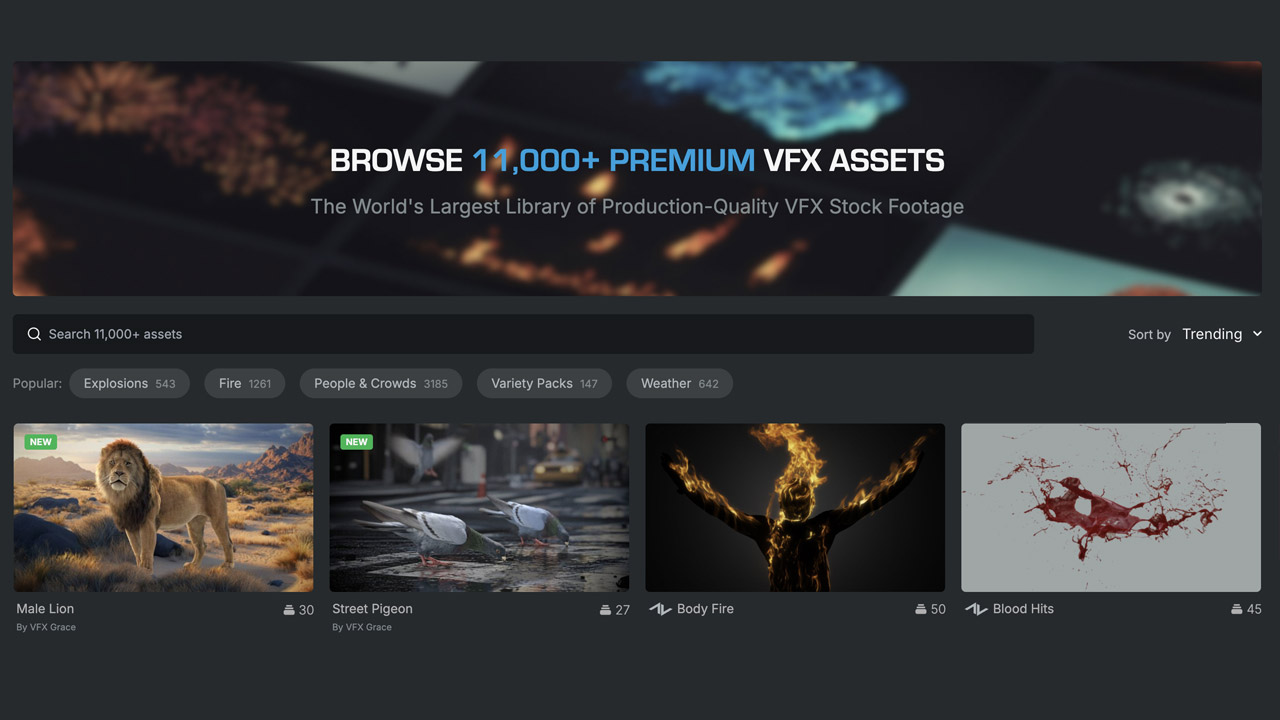

ActionVFX ➔

30% off all plans and credit packs - starts 11/26

Adobe ➔

50% off all apps and plans through 11/29

aescripts ➔

25% off everything through 12/6

Affinity ➔

50% off all products

Battleaxe ➔

30% off from 11/29-12/7

Boom Library ➔

30% off Boom One, their 48,000+ file audio library

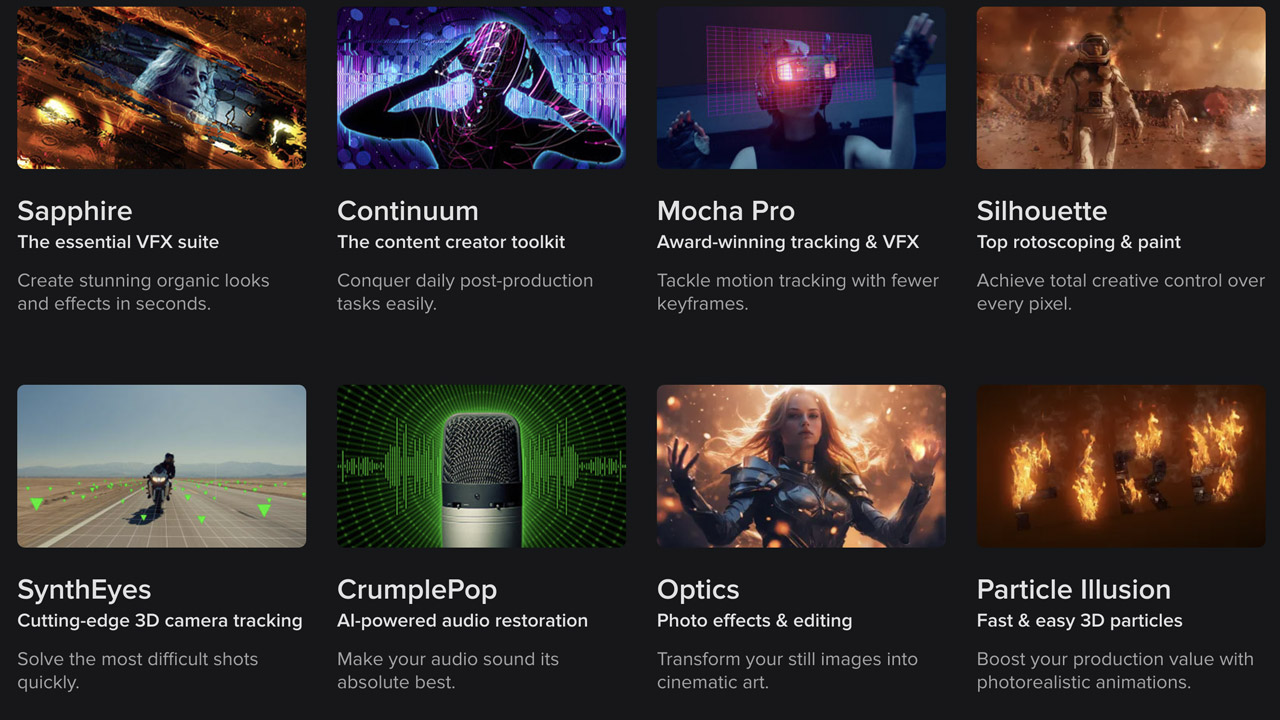

BorisFX ➔

25% off everything, 11/25-12/1

Cavalry ➔

33% off pro subscriptions (11/29 - 12/4)

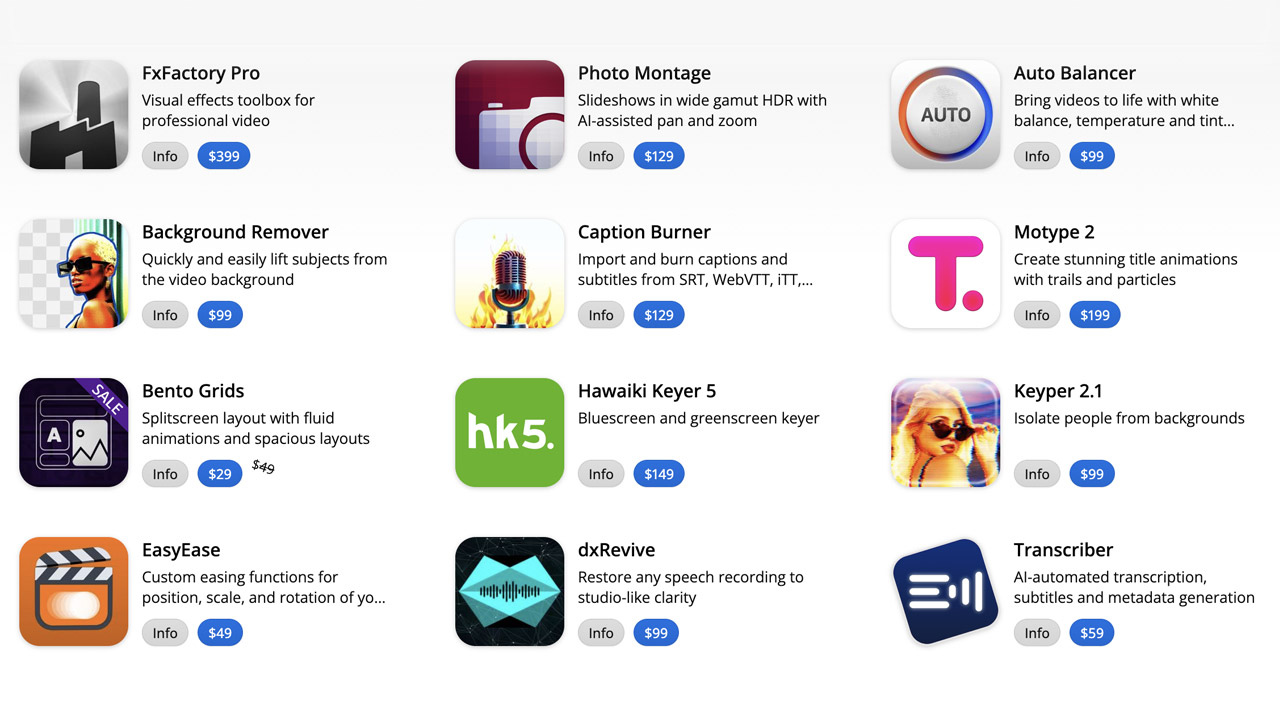

FXFactory ➔

25% off with code BLACKFRIDAY until 12/3

Goodboyninja ➔

20% off everything

Happy Editing ➔

50% off with code BLACKFRIDAY

Huion ➔

Up to 50% off affordable, high-quality pen display tablets

Insydium ➔

50% off through 12/4

JangaFX ➔

30% off an indie annual license

Kitbash 3D ➔

$200 off Cargo Pro, their entire library

Knights of the Editing Table ➔

Up to 20% off Premiere Pro Extensions

Maxon ➔

25% off Maxon One, ZBrush, & Redshift - Annual Subscriptions (11/29 - 12/8)

Mode Designs ➔

Deals on premium keyboards and accessories

Motion Array ➔

10% off the Everything plan

Motion Hatch ➔

Perfect Your Pricing Toolkit - 50% off (11/29 - 12/2)

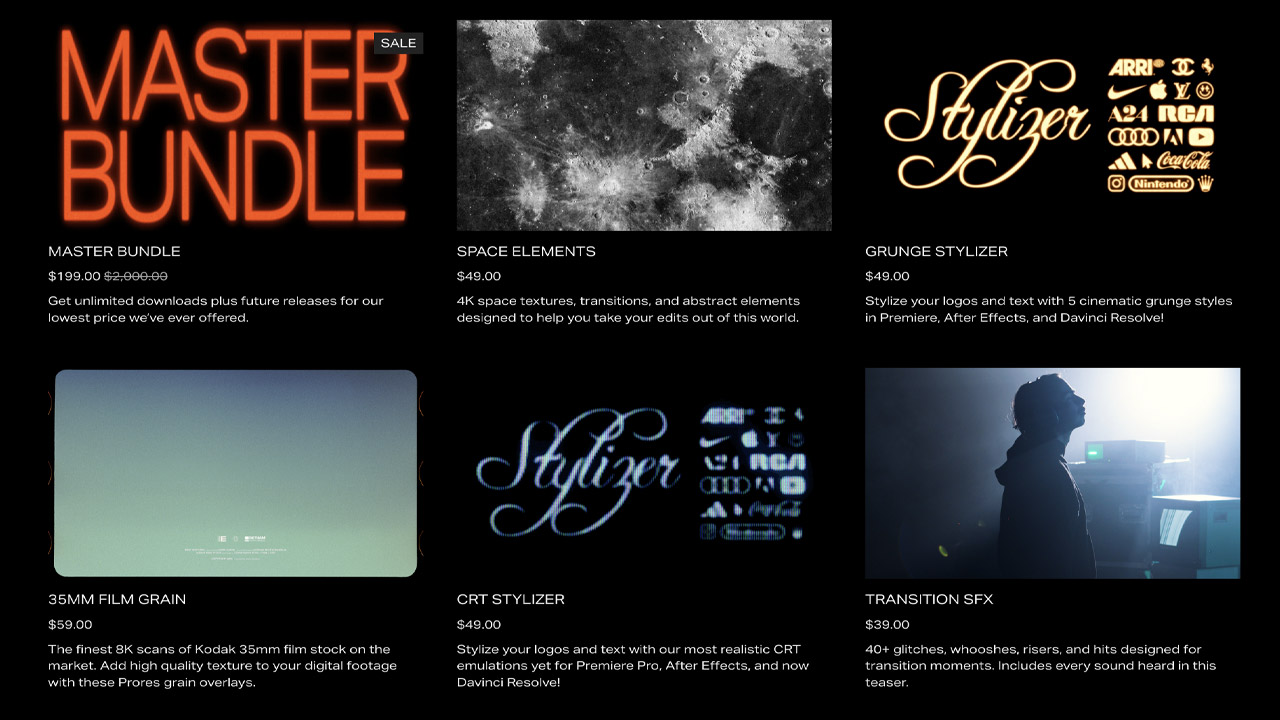

MotionVFX ➔

30% off Design/CineStudio, and PPro Resolve packs with code: BW30

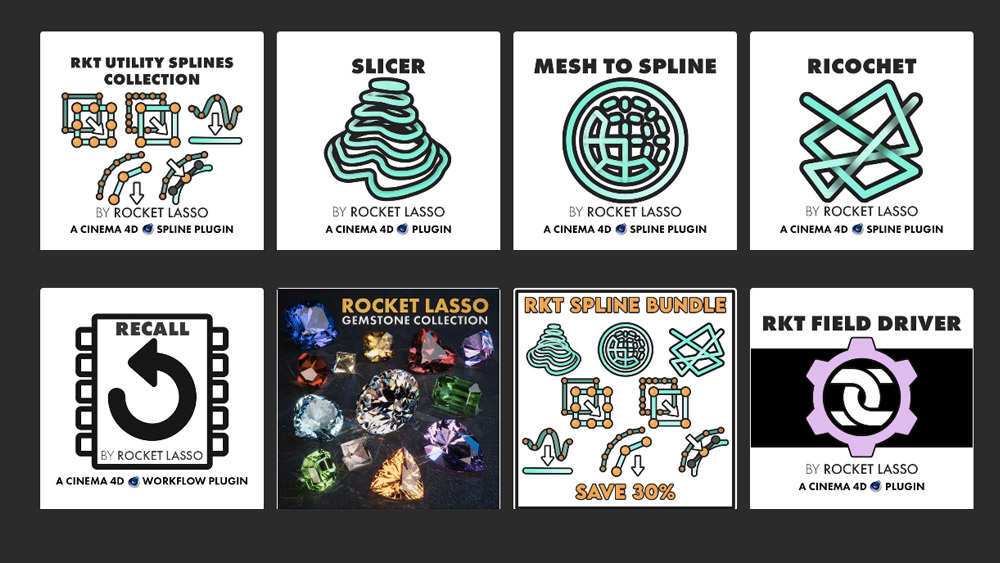

Rocket Lasso ➔

50% off all plug-ins (11/29 - 12/2)

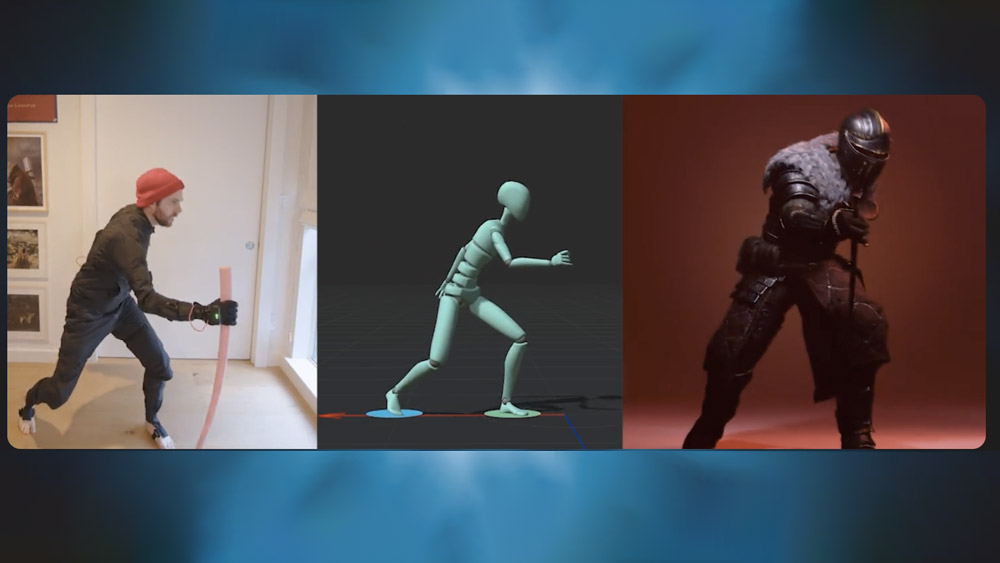

Rokoko ➔

45% off the indie creator bundle with code: RKK_SchoolOfMotion (revenue must be under $100K a year)

Shapefest ➔

80% off a Shapefest Pro annual subscription for life (11/29 - 12/2)

The Pixel Lab ➔

30% off everything

Toolfarm ➔

Various plugins and tools on sale

True Grit Texture ➔

50-70% off (starts Wednesday, runs for about a week)

Vincent Schwenk ➔

50% discount with code RENDERSALE

Wacom ➔

Up to $120 off new tablets + deals on refurbished items
